What’s the point of baby talk?

How caregivers instinctively simplify their speech for children—and how it helps

There is no empirical evidence suggesting that baby talk in any way hinders child language development—quite the opposite, in fact.

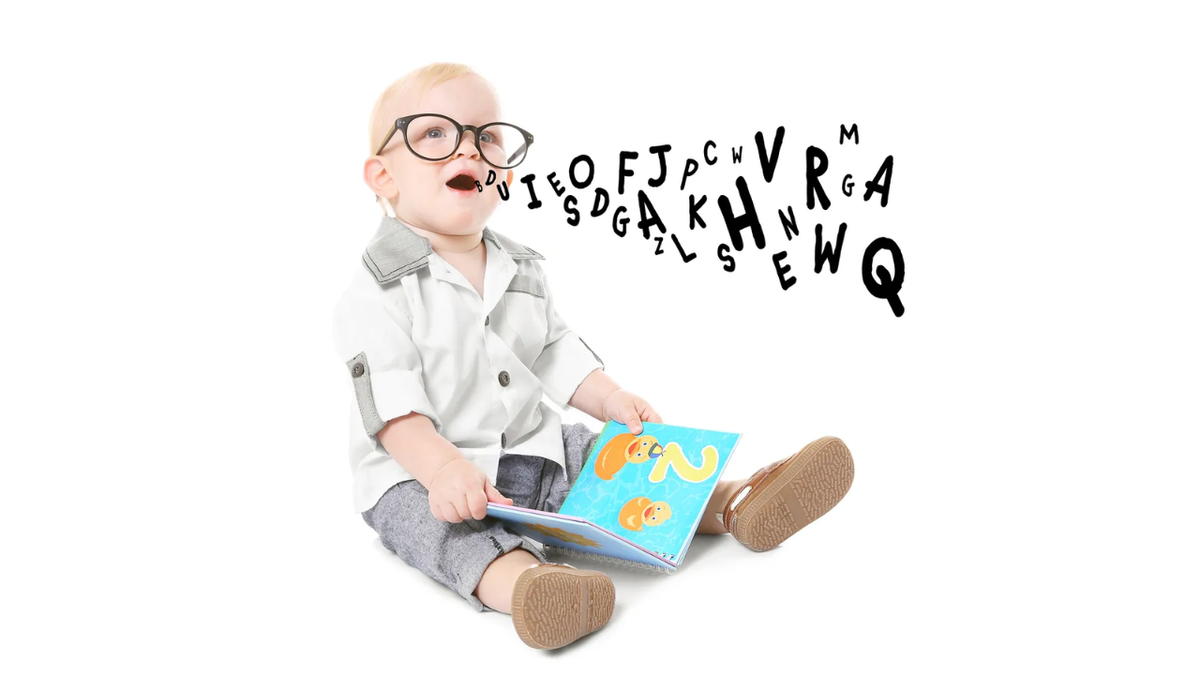

What exactly is baby talk and how is it different from the way adults regularly speak? More importantly, why do adults use it with children?

In this second issue of my special series on the science of baby talk, we’ll look at all the surprisingly sophisticated strategies and tactics that parents use to make their speech more accessible to their children.

ℹ️ Articles in this Series

- Part 1: Why you should be talking to your infant

- Part 2: What’s the point of baby talk? (this issue)

- Part 3: Is baby talk good for your child?

- Part 4: Do all cultures use baby talk?

- Part 5: Baby talk in the languages of the world (forthcoming)

- Part 6: How much should you talk to your child?

- Part 7: What really matters when talking to your child

The technical term for baby talk is infant-directed speech (IDS) or child-directed speech (CDS), depending on the focus (younger children or children of any age). Baby talk is a way of speaking used specifically during the process of socializing young children—teaching them how to be competent members of society, which includes understanding how to use the language of the community. This makes baby talk a special register—a way of speaking associated with specific social situations or specialized activities of particular groups. Baby talk used to be called motherese, but scholars now eschew this term to avoid gender stereotyping. More recently, as researchers have realized the important role that caregivers other than parents often play in child language development, the term caregiver speech has emerged as a more broadly-encompassing expression.

In this and the other articles in this series I’ll simply use baby talk unless I want to be more specific.

Some people also distinguish between baby talk and parentese, the idea being that baby talk uses cutesy, shortened, or nonsense words (nana for banana, num-num for food) whereas parentese doesn’t. Those who make this distinction typically claim that baby talk is bad for your child but parentese is good for them. They assert that using nonsense words and incorrect grammar with children hinders their language development. This idea is, to put it frankly, total bullshit. There is no empirical evidence suggesting that baby talk in any way hinders child language development—quite the opposite, in fact, and we’ll look at the cool ways that baby talk actually abets your child’s language learning in the following issues in this series. The idea that baby talk is harmful for your child’s language development is simply a holdover from the stigma against any type of infant-directed speech in general (and probably also due to the fact that many adults find it annoying to listen to).

In reality, using simplified pronunciations and grammatical structures may actually do a better job of meeting infants where they’re at in their language-learning journey. Children start out with extremely simple syllable and grammatical structures (e.g. only consonant-vowel (CV) syllables [Chee & Henke 2023: 752], and only two-word combinations with no function words) and progress to more complex ones over time. (You can listen to a timelapse of this happening with a single child in this fantastic TED Talk.) Consider, for example, some ways that infants simplify words early in their language acquisition process (Ibbotson 2022: 145–146):

- reduplication: the complete or partial repetition of a syllable, replacing other syllables
  - bottle → bobo
  - dummy → dudu

- consonant harmony: adjusting the consonants in a word so that they are more similar to (or exactly match) other consonants in the word
  - cat → tat
  - dog → dod

- context-sensitive voicing: voicing all the consonants in certain positions, such as at the start of words
  - pat → bat
  - pig → big

- final devoicing: devoicing all consonants at the ends of words
  - pad → pat
  - bag → bak

- fronting: articulating sounds further forward in the mouth
  - car → tar
  - goat → doat
  - pig → pid

- final consonant deletion: deleting the consonant at the end of a word or syllable (notice how this change results in CV syllables)
  - dog → do
  - mum → mu

- cluster reduction: reducing or simplifying consonant clusters to a single consonant
  - plane → pane
  - flag → fag
  - spoon → poon
  - splash → plash

- weak syllable deletion: dropping the unstressed syllable entirely
  - banana → nana

- stopping: pronouncing fricatives as stops (plosives)
  - van → pan
  - jump → dump
  - sun → tun
  - that → dat

- gliding: pronouncing liquid consonants as glides, so that /r/ → /w/ and /l/ → /j, w/
  - red → wed
  - yellow → yeyow

- metathesis: switching the order of sounds
  - elephant → efalent
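For readers who like to tinker, a few of the processes above are regular enough to sketch as simple rewrite rules. The Python below is only a rough illustration and is not taken from Ibbotson: it operates on ordinary spelling rather than on actual sounds (a big simplifying assumption), the function names are hypothetical, and the rules are tuned just far enough to reproduce the examples in the list.

```python
# Toy rewrite rules for four child-speech simplification processes.
# Assumption: spelling stands in for pronunciation, one letter per sound.

VOWELS = set("aeiou")


def is_consonant(ch: str) -> bool:
    """Treat every letter that is not a, e, i, o, u as a consonant."""
    return ch.isalpha() and ch not in VOWELS


def final_consonant_deletion(word: str) -> str:
    """Drop a word-final consonant: dog -> do."""
    return word[:-1] if word and is_consonant(word[-1]) else word


def cluster_reduction(word: str) -> str:
    """Simplify a word-initial cluster by one consonant.

    An initial s is dropped (spoon -> poon); otherwise the second
    consonant is dropped (plane -> pane).
    """
    if len(word) >= 2 and is_consonant(word[0]) and is_consonant(word[1]):
        if word[0] == "s":
            return word[1:]
        return word[0] + word[2:]
    return word


# Voiced stops and their voiceless counterparts.
DEVOICE = {"b": "p", "d": "t", "g": "k"}


def final_devoicing(word: str) -> str:
    """Devoice a word-final voiced stop: pad -> pat."""
    if word and word[-1] in DEVOICE:
        return word[:-1] + DEVOICE[word[-1]]
    return word


# Back (velar) stops and the front stops that replace them.
FRONT = {"c": "t", "k": "t", "g": "d"}


def fronting(word: str) -> str:
    """Replace back consonants with front ones everywhere: goat -> doat."""
    return "".join(FRONT.get(ch, ch) for ch in word)


if __name__ == "__main__":
    for rule, word in [
        (final_consonant_deletion, "dog"),
        (cluster_reduction, "splash"),
        (final_devoicing, "bag"),
        (fronting, "car"),
    ]:
        print(f"{rule.__name__}: {word} -> {rule(word)}")
```

A real model would work on phonemic transcriptions and would need to handle stress and syllable structure, which is why processes like weak syllable deletion and consonant harmony are left out of this sketch.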

English is hardly alone in this. Native American children learning a variety of indigenous languages have also been reported to change, replace, simplify, or delete syllables, as shown in the table below (Chee & Henke 2023: 753).

| Language | Child Speech | Target Form | Meaning |
|---|---|---|---|
| Algonquin | kakac | ki kakaciki | ‘dirt, filth, uncleanliness’ |
| Quileute | ā’ā’ | kā’ayo’ | ‘crow’ |
| Hopi | kwaʔa | ikwaʔa | ‘my grandfather’ |
| Dakota | kóka | ŝũk’ã́ | ‘horse’ |
| Navajo | kaya | ayą́ | ‘he is eating’ |
| Navajo | zhiní | jiní | ‘one said’ |

Reduplication is also quite common in child speech around the world. Here are examples from several Native American languages (Chee & Henke 2023: 753):

| Language | Child Speech | Target Form | Meaning |
|---|---|---|---|
| Mohawk | tata | kanà:taro | ‘bread’ |
| Zuni | we’we | wa’tsita | ‘dog’ |
| Quileute | dī’di’ | yi’sdak’ | ‘clothes’ |
| Hopi | táta | itáʔa | ‘my father’ |
| Comanche | ʔeroró·ʔ | táivo·ʔ | ‘white man’ |
| Cocopah | vánván | xasánʸ | ‘little girl’ |
| Inuktitut | piupuu | piu- | ‘be nice’ |
| East Cree | kiikii | aahkuhiiwaau | ‘it causes hurt’ |

These words sound a lot like baby talk, don’t they? It’s no coincidence that caregivers make phonotactic simplifications when talking to infants, because baby talk is really about linguistic accommodation: meeting children where they’re at in their language development by making communication as clear and understandable as possible. Parents naturally and intuitively adjust their speech to their child’s linguistic capabilities and preferences as the child learns (Kitamura & Lam 2009). They’ll often use the same pronunciations in baby talk that children are capable of producing themselves. As children master increasingly sophisticated sounds and sound combinations, parents gradually raise their expectations of their children’s phonetic abilities and normalize their own pronunciations in baby talk, all without even realizing they’re doing it! As another example of this linguistic accommodation, IDS tends to be more emotional at three months, more approving at six months, and more directive (“yes, look at the doggie”) at nine months (Kitamura & Burnham 2003). All this accommodation makes it easier for the child to sift through the enormous amount of linguistic input they receive and generalize grammatical patterns from it.

Why do people use baby talk?

When speaking to young children, one problem that caregivers have to solve is getting children to realize that speech is being directed towards them in the first place. The attention-getting (and attention-holding) devices that caregivers use to solve this problem are a major factor shaping baby talk (Dawson, Hernandez & Shain 2022: 351). The most salient of these tactics is exaggerated prosody, that is, higher pitch and a broader pitch range (Soderstrom et al. 2008).

Prosody is the set of phonetic and phonological cues that speakers use to structure their speech (Hieber 2016). It includes things like intonation, rhythm and timing, voice quality, pauses—anything that helps signal where one stretch of speech (syllables, words, clauses, etc.) ends and another begins, and how those sections of speech relate to one another. For example, a recent study shows that consonants at the beginnings of words are systematically lengthened across languages (Blum et al. 2024), which helps listeners determine where one word ends and the next begins.

In baby talk, adults generally use a wider pitch range and higher overall pitch—“great swooping curves of sound over an extended pitch range” (Saxton 2017: 88; Clark 2024: 40). Their speech tends to be slower, with lengthened syllables, longer pauses, and fewer disfluencies (hesitations and restarts) (Saxton 2017: 88). Baby-talking adults also tend to place object words at the ends of sentences and pronounce them fairly loudly, thus giving them special prominence (Messer 1981). Very young children especially are strongly attracted to this type of exaggerated prosody, so it serves as an excellent attention-getting device. At the same time, you can see how exaggerating these types of cues would be useful for infants trying to figure out how their language works.

Deaf parents of deaf children have additional attention-getting devices at their disposal: signs are often made in the visual field of the child rather than in their typical position near the signer. The two-handed ASL sign RABBIT, for example, is normally signed with both hands on either side of the head, but can be articulated in the child’s visual field, such as close to a picture of a rabbit in a book, or on the head of the child (Baker et al. 2016: 56). Deaf adults make sure that they sign to their deaf child when the child is actually looking at them, but they can also gain the child’s attention by tapping the child or waving their hand in the child’s visual field.

Another strategy for retaining a child’s attention is attending to things that the child is already paying attention to. Adults generally follow up on topics introduced by children (whether verbally or with their gaze, pointing, or other vocalizations), so much so that children set the agenda much more often than not. One study found that a particular child introduced about 20 new topics per hour, whereas her mother introduced only 5, and the mother followed up on the topics her daughter introduced (Moerk 1983). This responsiveness has been directly linked to later vocabulary size, but only when the response is linked to the child’s vocalization or babble (Clark 2024: 49).

Another factor that strongly influences baby talk is topic choice: adults choose topics that maximize the likelihood that the child will understand what is being said. This results in a smaller range of words (Ibbotson 2022: 57), and words that focus on the here-and-now, rather than concepts removed in time or space. CDS thus includes a higher frequency of concrete terms like cup, juice, and tree than abstract concepts like kindness, beauty, and justice (Saxton 2017: 89). Five topics in particular dominate infant-directed speech (Ferguson 1977; Ferguson & Debose 1977; Dawson, Hernandez & Shain 2022: 351):

- members of the family: mommy, daddy

- animals, games, and toys: peek-a-boo, choo-choo ‘train’

- parts of the body / bodily functions and routines: wee-wee ‘urinate’, night-night ‘go to sleep’

- food

- clothing

This trend holds across spoken and signed languages. Work with the Comanche, East Cree, Nuuchahnulth, and Twana languages, for instance, has found that common categories for specialized CDS vocabulary include kinship terms, body parts and bodily functions, and everyday actions, animals, and objects (Chee & Henke 2023: 748), and work with sign languages shows that the most frequently-discussed semantic domains are the same ones listed above (Fenson et al. 1994).

Linguist Madeline Beekman talks more about the functions of baby talk on her Substack.

What are the features of baby talk?

Let’s look at some other ways caregivers adjust their speech to infants. Not all caregivers engage in all of these strategies, and the details vary by individual and by culture (more on that in Part 4 of this series), but these are recurring patterns observed by child language acquisition researchers.

Generally speaking, baby talk is characterized by shorter sentences, simpler words, more repetition, and slower speech. This is true for both spoken and signed languages: adults articulate their signs more slowly with young children, making it easier for the child to see their signs (Baker et al. 2016: 56). In spoken language, individual sounds are pronounced more clearly than in adult-directed speech (ADS) (Dilley et al. 2014), whether vowels (Burnham, Kitamura & Vollmer-Conna 2002) or consonants (Cristià 2010). Sounds are also more evenly timed in IDS than ADS (Payne et al. 2010). In normal adult speech, vowels and consonants are pronounced longer or shorter depending on the sounds around them; in IDS, by contrast, vowels and consonants are given more similar lengths.

Caregivers also use vocabulary unique to the CDS register, such as tum-tum ‘tummy’, din-din ‘dinner’, and go bye-bye ‘leave’. Usually this child-directed vocabulary is based on words in the adult lexicon, but with simplification, sound substitution, or other sound changes. Here are similar examples from the Nuuchahnulth language (Kess & Kess 1986: 209):

| ADS Form | CDS Form | Meaning |
|---|---|---|
| tu·xʷši | tu·xʷ | ‘jump!’ |
| tí·qši | tí·q | ‘sit down!’ |
| ta·qyiči | ta·q | ‘stand up!’ |
| ya·cši | ya·c | ‘walk!’ |

However, the CDS register can include words that are completely unrelated to their ADS counterparts, such as how the word boo-boo isn’t related to any word like injury or wound. Here are similar cases from Nuuchahnulth, where the CDS form is unrelated to the ADS form (Kess & Kess 1986: 209):

| ADS Form | CDS Form | Meaning |
|---|---|---|
| naqši | ma·h | ‘drink!’ |
| haʔukʷ’i | pa·paš | ‘eat!’ |
| waʔičuʔi | hu·š | ‘go to sleep!’ |
| hu· | ʔi·x | ‘watch out!’ |

Words used in CDS are also:

- short, containing fewer sounds (phonemes) on average than words used with adults

- higher frequency, i.e. words that adults use the most often in everyday speech

- more predictable, in that they contain very common sound sequences, e.g. the sequence /ði/ in the word the is a much more common sequence than the reverse, /ið/, as in seethe, teethe, or wreathe.

- more similar to each other, meaning that there are a higher number of words that sound similar to any given word

This last feature results in a large number of pairs of words that differ only by a single sound, e.g. pat vs. bat, called minimal pairs. Such minimal pairs are extremely useful for children learning to differentiate sounds in a language.
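To make the idea of a minimal pair concrete, here is a small Python sketch that searches a word list for pairs differing in exactly one position. It is only an illustration under a strong assumption: each letter is treated as one sound, which holds for short words like pat and bat but not for English spelling in general. The function names and the sample word list are made up for this example.

```python
from itertools import combinations


def is_minimal_pair(a: str, b: str) -> bool:
    """True if the two words have equal length and differ in exactly one position."""
    return len(a) == len(b) and sum(x != y for x, y in zip(a, b)) == 1


def minimal_pairs(words: list[str]) -> list[tuple[str, str]]:
    """Return every minimal pair in the word list, in alphabetical order."""
    unique = sorted(set(words))
    return [(a, b) for a, b in combinations(unique, 2) if is_minimal_pair(a, b)]


if __name__ == "__main__":
    sample = ["pat", "bat", "pad", "pig", "big", "dog"]
    for a, b in minimal_pairs(sample):
        print(f"{a} vs. {b}")
```

With a phonemic transcription in place of spelling, the same comparison would also catch pairs whose spellings hide the single-sound difference.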

In terms of syntax, words are also more likely to be delivered in isolation in IDS (Ibbotson 2022: 57), and there are fewer instances of both negation and complex clauses (Sachs, Brown & Salerno 1976). Parents often leave out function words and grammatical endings (Dawson, Hernandez & Shain 2022: 353), and use entire noun phrases instead of pronouns. Because a pronoun stands in for a noun phrase and/or refers back to one, understanding pronouns requires the ability to track topics over the course of a conversation or text, making them difficult for children to master.

Subjects of sentences in IDS have a strong tendency to be agents (the person doing the action) (Rondal & Cession 1990), even though this is just one of the many functions that subjects have in English. For example, the subject of a passive sentence is a patient (person affected by the action) rather than an agent, and the subject of a meteorological verb like to rain is a meaningless dummy subject it (an expletive or pleonastic subject), e.g. it is raining. In fact, the notion of a grammatical subject is so complex that children don’t demonstrate full control over the category until age 9 (Braine et al. 1993)! Limiting the use of subjects to just agents when children are young helps ease infants into grasping this multifaceted construction.

Deaf caregivers have another means of simplifying the syntax of baby talk. In sign languages, facial expressions, mouth movements, or body movements can also be part of the grammar or lexicon of the language. For instance, it is common for sign languages to use facial expressions like raised eyebrows to mark questions (much like many spoken languages use rising intonation to mark questions). Such gestures can also be part of the form of individual signs. For example, the sign for SEARCH in Sign Language of the Netherlands is produced with the eyebrows lowered, and the sign for BE-PRESENT in British Sign Language and Sign Language of the Netherlands is produced with a mouth movement sh, even though that sound is not part of either the corresponding Dutch or English word (Baker et al. 2016: 4). Signers would be surprised or confused about these signs if they did not include these facial gestures.

The linguistic gestures and facial expressions made in addition to hand movements are called non-manual features (also non-manual components or gestures). They can be difficult for a child to learn because the face is also used to display emotion, so the child has to learn to distinguish when a facial expression or gesture is being used linguistically versus emotively or gesturally. So it should come as no surprise that one of the ways deaf mothers accommodate their child’s learning process is by avoiding the use of non-manual markers in a grammatical way until children are between 2;0 and 2;6 (Baker et al. 2016: 60).

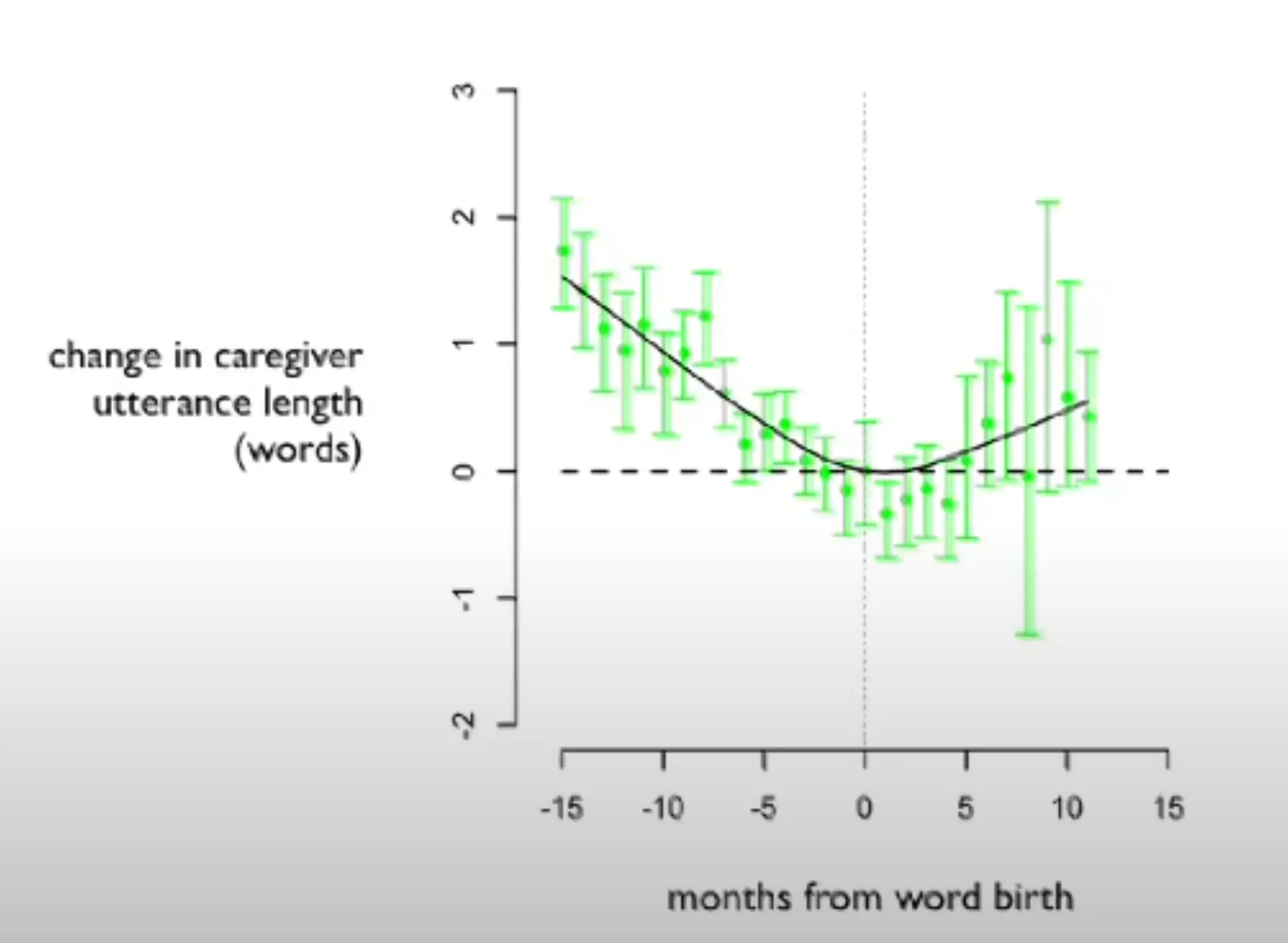

Ages are written as years;months, so that “3;9” means ‘3 years, 9 months old’.

Other ways that adults assist children in language learning are even more subtle. In his brilliant TED Talk summarizing his equally brilliant research, Deb Roy shows that each time his son started using a new word, this coincided with Roy and his wife subconsciously shortening any utterances containing that word when they spoke to their son (Roy et al. 2015). This unintentionally gave their son focused, targeted practice with that word.
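As a side note for anyone working with child language data, the years;months age notation used in this article is easy to convert to and from a plain month count. The helper names below are hypothetical, and the sketch handles only the simple years;months form shown here, not extended variants that also record days.

```python
def age_to_months(age: str) -> int:
    """Convert an age like '3;9' (3 years, 9 months) to a total number of months."""
    years_text, months_text = age.split(";")
    years, months = int(years_text), int(months_text)
    if years < 0 or not 0 <= months < 12:
        raise ValueError(f"not a valid years;months age: {age!r}")
    return years * 12 + months


def months_to_age(total_months: int) -> str:
    """Convert a total number of months back to the years;months notation."""
    if total_months < 0:
        raise ValueError("age in months cannot be negative")
    return f"{total_months // 12};{total_months % 12}"


if __name__ == "__main__":
    for age in ["2;0", "2;6", "3;9"]:
        print(f"{age} = {age_to_months(age)} months")
```

Converting to months makes it straightforward to sort children by age or to check whether a child falls inside a range such as 2;0 to 2;6.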

Finally, CDS utterances are also remarkably well-formed. Normal adult conversation is riddled with errors and restarts. We tend not to notice these errors because they’re a natural part of conversation, but if you look at the raw transcripts of conversations, you quickly realize how remarkable it is we’re ever able to make sense of anything we say to each other. But IDS is almost completely devoid of both disfluencies (false starts, mispronunciations, hesitations) and grammatical errors. The table below shows just how few disfluencies occurred, on average, per 100 words in CDS vs. ADS (Broen 1972: 11). Another survey found just one grammatical error in a corpus of 1,500 utterances (Newport, Gleitman & Gleitman 1977).

| Addressee (Age) | Free Play | Storytelling | Conversation |
|---|---|---|---|
| 2;3–3;5 | 0.58 | 0.66 | — |
| 3;10–5;10 | 1.61 | 0.77 | — |
| Adults | — | — | 4.70 |

Disfluencies per 100 words in child-directed speech vs. adult-directed speech (Broen 1972: 11)
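To put the numbers in the table in perspective, the short Python sketch below shows how a per-100-words rate is calculated and how many times more disfluent the adult-directed speech was than each child-directed condition. The rates are copied from the table; the function names and the raw counts in the usage example are invented purely for illustration and do not come from Broen's data. Keep in mind that the conditions differ in activity as well as addressee, so the ratios are only a rough comparison.

```python
# Disfluencies per 100 words, copied from the table above (Broen 1972: 11).
CHILD_DIRECTED_RATES = {
    ("2;3–3;5", "free play"): 0.58,
    ("2;3–3;5", "storytelling"): 0.66,
    ("3;10–5;10", "free play"): 1.61,
    ("3;10–5;10", "storytelling"): 0.77,
}
ADULT_CONVERSATION_RATE = 4.70


def rate_per_100(disfluencies: int, words: int) -> float:
    """Disfluencies per 100 words for a transcript of the given length."""
    if words <= 0:
        raise ValueError("transcript must contain at least one word")
    return 100 * disfluencies / words


def times_more_disfluent(adult_rate: float, child_directed_rate: float) -> float:
    """How many times higher the adult-directed rate is than the child-directed one."""
    return adult_rate / child_directed_rate


if __name__ == "__main__":
    # Hypothetical counts, only to show the arithmetic behind a rate.
    print(f"47 disfluencies in 1,000 words = {rate_per_100(47, 1000):.2f} per 100 words")

    for (age, activity), rate in CHILD_DIRECTED_RATES.items():
        ratio = times_more_disfluent(ADULT_CONVERSATION_RATE, rate)
        print(f"Adults vs. {age} ({activity}): {ratio:.1f} times as many disfluencies")
```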

Taken together, these findings make it clear that the modifications adults make in IDS are done for the benefit of the child’s language development. Caregivers continuously try to determine their infant’s state of knowledge (a skill called theory of mind) and knowingly or unknowingly adjust their speech to accommodate that state of knowledge.

Yet even though the intent (conscious or subconscious) of baby talk is to facilitate child language development, this doesn’t mean it’s necessary, or even useful. All developmentally normal children follow roughly the same stages of language development regardless of what percentage of the speech they hear is baby talk (although these stages vary by language, and the exact timing varies by individual). So what’s the point of baby talk? I’ve already hinted at its potential benefits, but in the next issue of this series we’ll look more extensively at the evidence that IDS actually improves child language acquisition. Be sure to subscribe to receive the rest of the issues in the series!

📚 Recommended Reading

“The birth of a word”

MIT researcher Deb Roy wanted to understand how his infant son learned language—so he wired up his house with video cameras to catch every moment (with exceptions) of his son's life, then parsed 90,000 hours of home video to watch gaaaa slowly turn into water. Astonishing, data-rich research with deep implications for how we learn.

HELLO Lab Presents

The Hearing Experience & Language Learning Outcomes (HELLO) Lab at the University of Connecticut has a great series of YouTube Videos about child language acquisition for parents.

How babies talk: The magic and mystery of language in the first three years of life

📑 References

- Baker, Anne, Beppie Van Den Bogaerde, Roland Pfau & Trude Schermer (eds.). 2016. The linguistics of sign languages: An introduction. John Benjamins. https://doi.org/10.1075/z.199.

- Blum, Frederic, Ludger Paschen, Robert Forkel, Susanne Fuchs & Frank Seifart. 2024. Consonant lengthening marks the beginning of words across a diverse sample of languages. Nature Human Behaviour 8(11). 2127–2138. https://doi.org/10.1038/s41562-024-01988-4.

- Braine, Martin D.S., Patricia J. Brooks, Nelson Cowan, Mark C. Samuels & Catherine Tamis-LeMonda. 1993. The development of categories at the semantics/syntax interface. Cognitive Development 8(4). 465–494. https://doi.org/10.1016/S0885-2014(05)80005-X.

- Broen, Patricia Ann. 1972. The verbal environment of the language-learning child (ASHA Monographs 17).

- Burnham, Denis, Christine Kitamura & Uté Vollmer-Conna. 2002. What’s new, pussycat? On talking to babies and animals. Science 296(5572). 1435–1435. https://doi.org/10.1126/science.1069587.

- Chee, Melvatha R. & Ryan E. Henke. 2023. Child and child-directed speech in North American languages. In Carmen Dagostino, Marianne Mithun & Keren Rice (eds.), The Languages and Linguistics of Indigenous North America (World of Linguistics 13.2), vol. 2, 741–766. De Gruyter. https://doi.org/10.1515/9783110712742-033.

- Clark, Eve V. 2024. First language acquisition. 4th edn. Cambridge University Press. https://doi.org/10.1017/9781009294485.

- Cristià, Alejandrina. 2010. Phonetic enhancement of sibilants in infant-directed speech. The Journal of the Acoustical Society of America 128(1). 424–434. https://doi.org/10.1121/1.3436529.

- Dawson, Hope, Antonio Hernandez & Cory Shain (eds.). 2022. Language files: Materials for an introduction to language and linguistics. 13th edn. The Ohio State University Press.

- Dilley, Laura C., Amanda L. Millett, J. Devin McAuley & Tonya R. Bergeson. 2014. Phonetic variation in consonants in infant-directed and adult-directed speech: the case of regressive place assimilation in word-final alveolar stops. Journal of Child Language 41(1). 155–175. https://doi.org/10.1017/S0305000912000670.

- Fenson, Larry, Philip S. Dale, J. Steven Reznick, Elizabeth Bates, Donna J. Thal & Stephen J. Pethick. 1994. Variability in early communicative development (Monographs of the Society for Research in Child Development 242). Vol. 59. https://doi.org/10.2307/1166093.

- Ferguson, Charles A. 1977. Baby talk as a simplified register. In Catherine E. Snow & Charles A. Ferguson (eds.), Talking to children: language input and acquisition. Cambridge University Press.

- Ferguson, Charles A. & C. E. Debose. 1977. Simplified registers, broken language, and pidginization. In A. Valdman (ed.), Pidgin and creole linguistics. Indiana University Press.

- Fernald, Anne, Traute Taeschner, Judy Dunn, Mechthild Papousek, Bénédicte De Boysson-Bardies & Ikuko Fukui. 1989. A cross-language study of prosodic modifications in mothers’ and fathers’ speech to preverbal infants. Journal of Child Language 16(3). 477–501. https://doi.org/10.1017/S0305000900010679.

- Hieber, Daniel W. 2016. The cohesive function of prosody in Ékegusií (Kisii) narratives: A functional-typological approach. University of California, Santa Barbara M.A. thesis. http://rgdoi.net/10.13140/RG.2.2.17818.18886. (22 August, 2025).

- Ibbotson, Paul. 2022. Language acquisition: The basics (The Basics Series). Routledge. https://doi.org/10.4324/9781003156536.

- Jones, Gary, Francesco Cabiddu, Doug J. K. Barrett, Antonio Castro & Bethany Lee. 2023. How the characteristics of words in child-directed speech differ from adult-directed speech to influence children’s productive vocabularies. First Language 43(3). 253–282. https://doi.org/10.1177/01427237221150070.

- Kess, Joseph Francis & Anita Copeland Kess. 1986. On Nootka baby talk. International Journal of American Linguistics 52(3). 201–211. https://doi.org/10.1086/466018.

- Kitamura, Christine & Denis Burnham. 2003. Pitch and communicative intent in mother’s speech: adjustments for age and sex in the first year. Infancy 4(1). 85–110. https://doi.org/10.1207/S15327078IN0401_5.

- Kitamura, Christine & Christa Lam. 2009. Age‐specific preferences for infant‐directed affective intent. Infancy 14(1). 77–100. https://doi.org/10.1080/15250000802569777.

- Moerk, Ernst L. 1983. The mother of Eve—as a first language teacher (Monographs on Infancy). Ablex.

- Newport, Elissa L., Henry Gleitman & Lila R. Gleitman. 1977. Mother, I’d rather do it myself: Some effects and non-effects of maternal speech style. In Catherine E. Snow & Charles A. Ferguson (eds.), Talking to children: language input and acquisition, 101–149. Cambridge University Press.

- Payne, Elinor, Brechtje Post, Lluïsa Astruc, Pilar Prieto & Maria del Mar Vanrell. 2010. Rhythmic modification in child directed speech. In Michela Russo (ed.), Prosodic universals: Comparative studies in rhythmic modeling and rhythm typology, 147–184. Aracne Editrice.

- Rondal, Jean A. & Anne Cession. 1990. Input evidence regarding the semantic bootstrapping hypothesis. Journal of Child Language 17(3). 711–717. https://doi.org/10.1017/S0305000900010965.

- Roy, Brandon C., Michael C. Frank, Philip DeCamp, Matthew Miller & Deb Roy. 2015. Predicting the birth of a spoken word. Proceedings of the National Academy of Sciences 112(41). 12663–12668. https://doi.org/10.1073/pnas.1419773112.

- Sachs, J., R. Brown & R. Salerno. 1976. Adult’s speech to children. In W. von Raffler Engel & Y. Lebrun (eds.), Baby talk and infant speech (Neurolinguistics 5). Peter de Ridder Press.

- Saxton, Matthew. 2017. Child language: Acquisition and development. 2nd edn. SAGE.

- Soderstrom, Melanie. 2007. Beyond babytalk: Re-evaluating the nature and content of speech input to preverbal infants. Developmental Review 27(4). 501–532. https://doi.org/10.1016/j.dr.2007.06.002.

- Wegdell, Franziska, Caroline Fryns, Johanna Schick, Lara Nellissen, Marion Laporte, Martin Surbeck, Maria A. Van Noordwijk, et al. 2025. The evolution of infant-directed communication: Comparing vocal input across all great apes. Science Advances 11(26). eadt7718. https://doi.org/10.1126/sciadv.adt7718.
